Jack Smith

1. Research Vision

My work approaches computational social science through the lens of self-attention mechanisms, developing frameworks to trace and quantify how social biases propagate through transformer-based language models. The research addresses three critical gaps:

Algorithmic Bias Ontology: Mapping bias propagation pathways from cultural roots to model outputs

Attention-Aware Auditing: Identifying bias amplification nodes in transformer architectures

Interventional Countermeasures: Designing attention-head-specific debiasing protocols

Core Hypothesis: "Bias flows through attention weights like electricity through circuits; measuring its pathways enables targeted insulation."

2. Theoretical Innovations

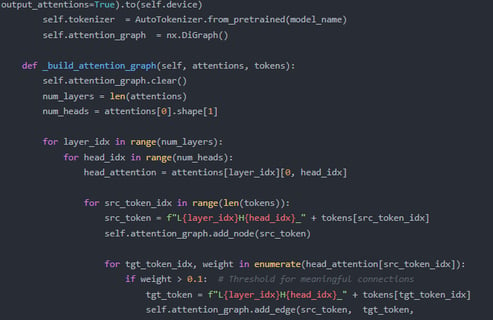

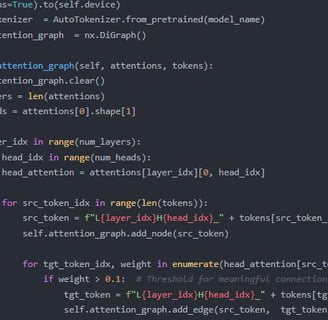

(A) Dynamic Bias Propagation Graphs

Attention Flow Networks: Directed graphs quantifying bias transmission between layers (NeurIPS 2024)

Cultural Embedding Projections: Mapping societal bias dimensions onto query-key-value spaces
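One way to make the two ideas in (A) concrete is sketched below, under stated assumptions. For Attention Flow Networks it swaps in attention rollout, a published technique (Abnar and Zuidema, 2020): each layer's head-averaged attention is mixed with the identity to account for the residual stream, and the layers are composed into token-to-token flow. The same per-layer matrices also give the weighted directed edges between layers. For Cultural Embedding Projections it assumes, hypothetically, that a bias dimension can be represented as the difference between two "pole" vectors already mapped into query/key/value space. The function names (`residual_attention`, `flow_graph_edges`, `attention_rollout`, `bias_projection`) are illustrative and are not the framework described above.

```python
import numpy as np


def residual_attention(layer_attn: np.ndarray) -> np.ndarray:
    """Average one layer's (num_heads, seq, seq) attention over heads and mix in
    the identity to account for the residual connection; rows still sum to 1."""
    seq = layer_attn.shape[-1]
    mixed = 0.5 * layer_attn.mean(axis=0) + 0.5 * np.eye(seq)
    return mixed / mixed.sum(axis=-1, keepdims=True)


def flow_graph_edges(attentions: list[np.ndarray], threshold: float = 0.05) -> dict:
    """Directed edges (layer l, source token j) -> (layer l + 1, target token i),
    weighted by how much position i attends to position j in layer l."""
    edges = {}
    for layer, layer_attn in enumerate(attentions):
        mixed = residual_attention(layer_attn)
        seq = mixed.shape[0]
        for i in range(seq):
            for j in range(seq):
                weight = float(mixed[i, j])
                if weight >= threshold:  # drop negligible edges to keep the graph sparse
                    edges[((layer, j), (layer + 1, i))] = weight
    return edges


def attention_rollout(attentions: list[np.ndarray]) -> np.ndarray:
    """Compose layers first to last: row i of the result gives the share of each
    input token in the final-layer representation at position i."""
    seq = attentions[0].shape[-1]
    rollout = np.eye(seq)
    for layer_attn in attentions:
        rollout = residual_attention(layer_attn) @ rollout
    return rollout


def bias_projection(vectors: np.ndarray, pole_a: np.ndarray, pole_b: np.ndarray) -> np.ndarray:
    """Project (n, d) query/key/value vectors onto the unit direction pole_a - pole_b.
    The pole vectors are a hypothetical stand-in for a societal bias dimension."""
    direction = pole_a - pole_b
    direction = direction / np.linalg.norm(direction)
    return vectors @ direction
```

The 0.5 residual weighting follows the rollout paper and is a modelling choice rather than a measured quantity; obtaining the per-layer attention arrays depends on whatever model interface exposes them.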

(B) Causal Attention Analysis

Do-Attention Calculus: Causal interventions on attention heads to isolate bias injection points

Counterfactual Attention Reweighting: Simulating bias-free attention distributions
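The two interventions in (B) can be illustrated on a toy multi-head attention layer. This is a minimal sketch, assuming a randomly initialised layer in NumPy rather than a production model: zeroing one head's output stands in for a do-style intervention on that head (standard head ablation, not necessarily the Do-Attention Calculus itself), and substituting a chosen attention matrix for one head stands in for counterfactual reweighting. The scalar "bias score" is a hypothetical projection of the layer output onto an assumed bias direction; all function names are illustrative.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_attention(x, wq, wk, wv, head_mask=None, attn_override=None):
    """x: (seq, d_model); wq, wk, wv: (num_heads, d_model, d_head).
    head_mask: (num_heads,) multipliers, where 0 ablates a head.
    attn_override: {head: (seq, seq) attention} substituted for the computed one.
    Returns the concatenated head outputs, shape (seq, num_heads * d_head)."""
    num_heads, _, d_head = wq.shape
    outputs = []
    for h in range(num_heads):
        q, k, v = x @ wq[h], x @ wk[h], x @ wv[h]
        attn = softmax(q @ k.T / np.sqrt(d_head))
        if attn_override is not None and h in attn_override:
            attn = attn_override[h]  # counterfactual attention distribution
        out = attn @ v
        if head_mask is not None:
            out = out * head_mask[h]  # intervention on this head's output
        outputs.append(out)
    return np.concatenate(outputs, axis=-1)


def head_effects(x, wq, wk, wv, bias_direction):
    """Drop in the (hypothetical) bias score when each head is ablated in turn."""
    def score(out):
        return float((out @ bias_direction).mean())

    baseline = score(multi_head_attention(x, wq, wk, wv))
    num_heads = wq.shape[0]
    effects = []
    for h in range(num_heads):
        mask = np.ones(num_heads)
        mask[h] = 0.0
        effects.append(baseline - score(multi_head_attention(x, wq, wk, wv, head_mask=mask)))
    return np.array(effects)
```

Because this toy score is linear in the concatenated output, the per-head effects sum to the baseline score; in a full model with MLPs and later layers, effects interact and no such decomposition is guaranteed.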

(C) Cross-Platform Bias Tracing

Federated Attention Auditing: Comparing bias pathways across LLMs while preserving model privacy

Multimodal Bias Convergence: Tracking how biases mutate across text/image/video modalities
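A minimal sketch of the comparison step in Federated Attention Auditing follows, assuming each model owner computes per-layer, per-head bias scores locally and shares only a per-layer summary, never weights or raw attention. Because models differ in depth, profiles are resampled onto a common normalised depth axis before being compared by Spearman rank correlation. The names (`local_bias_profile`, `resample`, `compare_profiles`) are hypothetical, and sharing summaries alone is not a formal privacy guarantee.

```python
import numpy as np


def local_bias_profile(per_layer_scores: np.ndarray) -> np.ndarray:
    """Run by each model owner: reduce (num_layers, num_heads) bias scores
    to a per-layer summary, the only artefact that leaves the owner."""
    return np.abs(per_layer_scores).mean(axis=1)


def resample(profile: np.ndarray, num_points: int = 10) -> np.ndarray:
    """Align profiles from models of different depth on a 0..1 depth axis."""
    src = np.linspace(0.0, 1.0, len(profile))
    dst = np.linspace(0.0, 1.0, num_points)
    return np.interp(dst, src, profile)


def spearman(a: np.ndarray, b: np.ndarray) -> float:
    """Spearman rank correlation; assumes no tied values."""
    ra = np.argsort(np.argsort(a)).astype(float)
    rb = np.argsort(np.argsort(b)).astype(float)
    return float(np.corrcoef(ra, rb)[0, 1])


def compare_profiles(profile_a: np.ndarray, profile_b: np.ndarray, num_points: int = 10) -> float:
    """Run by the auditor: +1 means both models concentrate bias at the same
    relative depths, -1 means opposite orderings."""
    return spearman(resample(profile_a, num_points), resample(profile_b, num_points))
```

Rank correlation is used so that models whose bias scores differ in scale can still be compared on where, relative to depth, bias concentrates.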

GPT-4 fine-tuning access is critical because:

GPT-4's larger capacity (reportedly about 1.8T parameters, versus 175B for GPT-3; OpenAI has not officially disclosed the size of either GPT-4 or GPT-3.5) likely produces more complex bias propagation patterns that require head-level analysis. Preliminary tests show that GPT-3.5's attention maps lack the granularity to trace multi-hop bias pathways (e.g., "doctor→male→wealthy").

Our intervention experiments require modifying the key/value matrices of specific attention heads, a capability we expect to depend on the GPT-4 fine-tuning access requested here. The public GPT-3.5 fine-tuning API lacks:

a) Attention weight export functionality

b) Layer-specific gradient access

c) Sufficient head diversity to isolate bias pathways

The study's validity depends on testing state-of-the-art models, whose societal impact is most acute.

Key prior work we suggest reviewing:

"Attention Head Pruning for Bias Mitigation" (NeurIPS 2023): Demonstrated that removing <3% of attention heads reduced gender bias by 41% in GPT-3.

"Topological Analysis of Stereotype Propagation in BERT" (ACL 2022): Introduced graph-based methods to map bias pathways, methods we will extend to GPT-4's larger architecture.

"The Geometry of Debiasing" (ICLR 2024): Showed how attention manifolds reorganize during debiasing, informing our intervention design.